StereoDepth¶

The StereoDepth node calculates disparity and/or depth from a stereo camera pair (2x MonoCamera/ColorCamera). We suggest following the Configuring Stereo Depth tutorial to achieve the best depth results.

How to place it¶

Python:

pipeline = dai.Pipeline()

stereo = pipeline.create(dai.node.StereoDepth)

C++:

dai::Pipeline pipeline;

auto stereo = pipeline.create<dai::node::StereoDepth>();

Inputs and Outputs¶

               ┌───────────────────┐
               │                   │ confidenceMap
               │                   ├─────────────►
               │                   │rectifiedLeft
               │                   ├─────────────►
left           │                   │   syncedLeft
──────────────►│-------------------├─────────────►
               │                   │   depth [mm]
               │                   ├─────────────►
               │    StereoDepth    │    disparity
               │                   ├─────────────►
right          │                   │  syncedRight
──────────────►│-------------------├─────────────►
               │                   │rectifiedRight
               │                   ├─────────────►
inputConfig    │                   │     outConfig
──────────────►│-------------------├─────────────►
               └───────────────────┘

left - ImgFrame from the left stereo camera

right - ImgFrame from the right stereo camera

inputConfig - StereoDepthConfig

Internal block diagram of StereoDepth node¶

On the diagram, red rectangles are firmware settings that are configurable via the API. Gray rectangles are settings that are not yet exposed to the API. We plan on exposing as much configurability as possible, but please inform us if you would like to see these settings configurable sooner.

If you click on the image, you will be redirected to the webapp. Some blocks have notes that provide additional technical information.

Currently configurable blocks¶

Left-Right Check or LR-Check is used to remove incorrectly calculated disparity pixels due to occlusions at object borders (Left and Right camera views are slightly different).

1. Computes disparity by matching in R->L direction

2. Computes disparity by matching in L->R direction

3. Combines results from 1 and 2, running on Shave: each pixel d = disparity_LR(x,y) is compared with disparity_RL(x-d,y). If the difference is above a threshold, the pixel at (x,y) in the final disparity map is invalidated.

You can use debugDispLrCheckIt1 and debugDispLrCheckIt2 debug outputs for debugging/fine-tuning purposes.
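The combine step of the left-right check can be sketched in plain Python. This is only an illustration of the comparison described above, not the firmware implementation: the function name `lr_check`, the use of nested lists for disparity maps, and the choice to treat matches that fall outside the image as invalid are all assumptions.

```python
def lr_check(disp_lr, disp_rl, threshold):
    """Keep disp_lr[y][x] only where it agrees with disp_rl[y][x - d].

    disp_lr, disp_rl: disparity maps as lists of rows of integers.
    Invalidated pixels are set to 0.
    """
    height = len(disp_lr)
    width = len(disp_lr[0])
    out = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            d = disp_lr[y][x]
            xr = x - d  # column of the corresponding pixel in the other map
            if xr < 0:
                continue  # assumption: a match outside the image stays invalid
            if abs(d - disp_rl[y][xr]) <= threshold:
                out[y][x] = d
    return out


disp_lr = [[0, 1, 2, 2]]
disp_rl = [[1, 2, 2, 0]]
print(lr_check(disp_lr, disp_rl, threshold=0))  # [[0, 1, 0, 2]]
```

A larger threshold keeps more pixels: with `threshold=1` the same input keeps the pixel at x=2 as well.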

Extended disparity mode allows detecting objects at closer distances for a given baseline. It increases the maximum disparity search from 96 to 191 levels, so the disparity range becomes [0..190].

This halves the minimum perceivable distance, since the minimum distance is now focal_length * base_line_dist / 190 instead of focal_length * base_line_dist / 95.

1. Computes disparity on the original size images (e.g. 1280x720)

2. Computes disparity on 2x downscaled images (e.g. 640x360)

3. Combines the two level disparities on Shave, effectively covering a total disparity range of 191 pixels (in relation to the original resolution).

You can use debugExtDispLrCheckIt1 and debugExtDispLrCheckIt2 debug outputs for debugging/fine-tuning purposes.

Subpixel mode improves precision and is especially useful for long-range measurements. It also helps to estimate surface normals more accurately.

Besides the integer disparity output, the stereo engine is programmed to dump the cost volume (96 disparity levels per pixel) to memory. Software interpolation is then done on Shave, producing a final disparity with 3 fractional bits. This results in significantly more granular depth steps (8 additional steps between the integer-pixel depth steps) and, theoretically, longer-distance depth viewing, as the maximum depth is no longer limited by a feature being a full integer pixel step apart, but rather 1/8 of a pixel. In this mode, the stereo cameras provide: 94 depth steps * 8 subpixel depth steps + 2 (min/max values) = 754 depth steps.

For comparison of normal disparity vs. subpixel disparity images, click here.
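To make the effect of the fractional bits concrete, here is a small sketch that converts a raw subpixel disparity value into depth. It assumes the raw value is the disparity in pixels multiplied by 2^fractional_bits (consistent with the 3 fractional bits described above); the function name and the example focal length and baseline are illustrative only.

```python
def depth_from_subpixel(raw_disparity, focal_px, baseline, fractional_bits=3):
    """Convert a raw subpixel disparity to depth (same unit as baseline).

    Assumes raw_disparity = disparity_in_pixels * 2**fractional_bits.
    A raw value of 0 is treated as invalid and returns 0.0.
    """
    if raw_disparity == 0:
        return 0.0
    disparity_px = raw_disparity / (1 << fractional_bits)
    return focal_px * baseline / disparity_px


# Illustrative values: 882.5 px focal length, 7.5 cm baseline
focal_px, baseline_cm = 882.5, 7.5

# Integer disparity steps 2 px and 1 px are far apart in depth...
print(depth_from_subpixel(16, focal_px, baseline_cm))  # 2.0 px
print(depth_from_subpixel(8, focal_px, baseline_cm))   # 1.0 px
# ...while subpixel mode adds intermediate steps such as 1.5 px
print(depth_from_subpixel(12, focal_px, baseline_cm))  # 1.5 px
```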

Depth Filtering / Depth Post-Processing is performed at the end of the depth pipeline. It helps with noise reduction and overall depth quality.

The median filter is a non-edge-preserving filter that can be used to reduce noise and smooth the depth map. It is implemented in hardware, so it is the fastest filter.

-

enum

dai::MedianFilter Median filter config

Values:

-

enumerator

MEDIAN_OFF

-

enumerator

KERNEL_3x3

-

enumerator

KERNEL_5x5

-

enumerator

KERNEL_7x7

-

enumerator

Speckle Filter is used to reduce speckle noise. Speckle noise is a region with large variance between neighboring disparity/depth pixels, and the speckle filter tries to filter out such regions.

-

struct

dai::RawStereoDepthConfig::PostProcessing::SpeckleFilter Speckle filtering. Removes speckle noise.

Public Members

-

bool

enable= false Whether to enable or disable the filter.

-

std::uint32_t

speckleRange= 50 Speckle search range.

-

bool

Temporal Filter is intended to improve the depth data persistency by manipulating per-pixel values based on previous frames. The filter performs a single pass on the data, adjusting the depth values while also updating the tracking history. In cases where the pixel data is missing or invalid, the filter uses a user-defined persistency mode to decide whether the missing value should be rectified with stored data. Note that due to its reliance on historic data the filter may introduce visible blurring/smearing artifacts, and therefore is best-suited for static scenes.

-

struct

dai::RawStereoDepthConfig::PostProcessing::TemporalFilter Temporal filtering with optional persistence.

Public Types

-

enum

PersistencyMode Persistency algorithm type.

Values:

-

enumerator

PERSISTENCY_OFF

-

enumerator

VALID_8_OUT_OF_8

-

enumerator

VALID_2_IN_LAST_3

-

enumerator

VALID_2_IN_LAST_4

-

enumerator

VALID_2_OUT_OF_8

-

enumerator

VALID_1_IN_LAST_2

-

enumerator

VALID_1_IN_LAST_5

-

enumerator

VALID_1_IN_LAST_8

-

enumerator

PERSISTENCY_INDEFINITELY

-

enumerator

Public Members

-

bool

enable= false Whether to enable or disable the filter.

-

PersistencyMode

persistencyMode= PersistencyMode::VALID_2_IN_LAST_4 Persistency mode. If the current disparity/depth value is invalid, it will be replaced by an older value, based on persistency mode.

-

float

alpha= 0.4f The alpha factor of an exponential moving average: alpha = 1 means no filtering, alpha = 0 means an infinite filter. Determines the extent of the temporal history that should be averaged.

-

std::int32_t

delta= 0 Step-size boundary. Establishes the threshold used to preserve surfaces (edges). If the disparity value between neighboring pixels exceeds the disparity threshold set by this delta parameter, then filtering will be temporarily disabled. Default value 0 means auto: 3 disparity integer levels. In case of subpixel mode it’s 3*number of subpixel levels.
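The roles of alpha and delta can be illustrated with a simplified exponential moving average. This is a sketch under stated assumptions, not the on-device filter: it compares each pixel with its value in the previous frame, skips smoothing when the difference exceeds delta, and does not model the persistency modes. The function name `temporal_filter` and the explicit `delta=3` default (standing in for the "auto" value) are hypothetical.

```python
def temporal_filter(current, previous, alpha=0.4, delta=3):
    """Blend the current frame with the previous filtered frame.

    alpha = 1 returns the current values unchanged (no filtering);
    smaller alpha gives more weight to history.
    Pixels with value 0 are treated as invalid.
    """
    out = []
    for cur_row, prev_row in zip(current, previous):
        row = []
        for cur, prev in zip(cur_row, prev_row):
            if cur == 0:
                row.append(0)  # invalid now; persistency is not modeled here
            elif prev == 0 or abs(cur - prev) > delta:
                row.append(cur)  # no history, or an edge: do not smooth
            else:
                row.append(alpha * cur + (1 - alpha) * prev)
        out.append(row)
    return out


print(temporal_filter([[10, 20, 0]], [[12, 40, 5]], alpha=0.5))
# [[11.0, 20, 0]]
```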

-

enum

Spatial Edge-Preserving Filter will fill invalid depth pixels with valid neighboring depth pixels. It performs a series of 1D horizontal and vertical passes or iterations, to enhance the smoothness of the reconstructed data. It is based on this research paper.

-

struct

dai::RawStereoDepthConfig::PostProcessing::SpatialFilter 1D edge-preserving spatial filter using high-order domain transform.

Public Members

-

bool

enable= false Whether to enable or disable the filter.

-

std::uint8_t

holeFillingRadius= 2 An in-place heuristic symmetric hole-filling mode applied horizontally during the filter passes. Intended to rectify minor artefacts with minimal performance impact. Search radius for hole filling.

-

float

alpha= 0.5f The alpha factor of an exponential moving average: alpha = 1 means no filtering, alpha = 0 means an infinite filter. Determines the amount of smoothing.

-

std::int32_t

delta= 0 Step-size boundary. Establishes the threshold used to preserve “edges”. If the disparity value between neighboring pixels exceeds the disparity threshold set by this delta parameter, then filtering will be temporarily disabled. Default value 0 means auto: 3 disparity integer levels. In case of subpixel mode it’s 3*number of subpixel levels.

-

std::int32_t

numIterations= 1 Number of iterations over the image in both horizontal and vertical direction.

-

bool

Threshold Filter filters out all disparity/depth pixels outside the configured min/max threshold values.

-

class

depthai.RawStereoDepthConfig.PostProcessing.ThresholdFilter
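As a rough illustration of what the threshold filter does, the sketch below zeroes every depth value outside a min/max range. The function name, the inclusive bounds, and the use of 0 as the "invalid" marker are assumptions made for this example.

```python
def threshold_filter(depth, min_range, max_range):
    """Set depth values outside [min_range, max_range] to 0 (invalid)."""
    return [
        [d if min_range <= d <= max_range else 0 for d in row]
        for row in depth
    ]


# Depth values in millimeters; keep only 400 mm .. 15000 mm
print(threshold_filter([[100, 500, 20000]], 400, 15000))  # [[0, 500, 0]]
```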

Decimation Filter sub-samples the depth map, which reduces the depth scene complexity and allows other filters to run faster. Setting decimationFactor to 2 will downscale a 1280x800 depth map to 640x400.

-

struct

dai::RawStereoDepthConfig::PostProcessing::DecimationFilter Decimation filter. Reduces the depth scene complexity. The filter runs on kernel sizes [2x2] to [8x8] pixels.

Public Types

-

enum

DecimationMode Decimation algorithm type.

Values:

-

enumerator

PIXEL_SKIPPING

-

enumerator

NON_ZERO_MEDIAN

-

enumerator

NON_ZERO_MEAN

-

enumerator

Public Members

-

std::uint32_t

decimationFactor= 1 Decimation factor. Valid values are 1,2,3,4. Disparity/depth map x/y resolution will be decimated with this value.

-

DecimationMode

decimationMode= DecimationMode::PIXEL_SKIPPING Decimation algorithm type.
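A minimal sketch of the PIXEL_SKIPPING mode, assuming it keeps every N-th pixel in both directions starting from the top-left corner (which pixel of each block is kept on the device is not stated here, so that part is an assumption). The function name is hypothetical.

```python
def decimate_pixel_skipping(depth, factor):
    """Keep every `factor`-th pixel in x and y."""
    if factor not in (1, 2, 3, 4):
        raise ValueError("decimationFactor must be 1, 2, 3 or 4")
    return [row[::factor] for row in depth[::factor]]


frame = [
    [1, 2, 3, 4],
    [5, 6, 7, 8],
    [9, 10, 11, 12],
    [13, 14, 15, 16],
]
print(decimate_pixel_skipping(frame, 2))  # [[1, 3], [9, 11]]

# A 1280x800 map decimated by 2 becomes 640x400
big = [[0] * 1280 for _ in range(800)]
small = decimate_pixel_skipping(big, 2)
print(len(small[0]), len(small))  # 640 400
```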

-

enum

Mesh files (homography matrix) are generated using the camera intrinsics, distortion coefficients, and rectification rotations. These files help to overcome camera distortions, which increases accuracy, and are also helpful when wide-FOV lenses are used.

Note

Currently, mesh files are generated only for stereo cameras, on the host, during calibration. The generated mesh files are stored in depthai/resources, from where users can load them to the device. This process will be moved on-device in upcoming releases.

-

void

dai::node::StereoDepth::loadMeshFiles(const dai::Path &pathLeft, const dai::Path &pathRight) Specify local filesystem paths to the mesh calibration files for ‘left’ and ‘right’ inputs.

When a mesh calibration is set, it overrides the camera intrinsics/extrinsics matrices. Overrides useHomographyRectification behavior. Mesh format: a sequence of (y,x) points as ‘float’ with coordinates from the input image to be mapped in the output. The mesh can be subsampled, configured by setMeshStep.

With a 1280x800 resolution and the default (16,16) step, the required mesh size is:

width: 1280 / 16 + 1 = 81

height: 800 / 16 + 1 = 51
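The same arithmetic can be wrapped in a small helper for other resolutions or mesh steps. The function name is hypothetical, and the use of integer division for resolutions that are not a multiple of the step is an assumption.

```python
def mesh_size(width, height, step=(16, 16)):
    """Return the number of mesh points (columns, rows) for a given resolution."""
    step_x, step_y = step
    return width // step_x + 1, height // step_y + 1


print(mesh_size(1280, 800))  # (81, 51)
```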

-

void

dai::node::StereoDepth::loadMeshData(const std::vector<std::uint8_t> &dataLeft, const std::vector<std::uint8_t> &dataRight) Specify mesh calibration data for ‘left’ and ‘right’ inputs, as vectors of bytes. Overrides useHomographyRectification behavior. See loadMeshFiles for the expected data format.

-

void

dai::node::StereoDepth::setMeshStep(int width, int height) Set the distance between mesh points. Default: (16, 16)

Confidence threshold: The stereo depth algorithm searches for the matching feature from a right camera point in the left image (along the 96 disparity levels). During this process it computes the cost for each disparity level, chooses the minimal cost between two disparities, and uses it to compute the confidence at each pixel. The stereo node outputs disparity/depth pixels only where the depth confidence value is below the confidence threshold (a lower confidence value means better depth accuracy).

LR check threshold: Disparity is considered for the output when the difference between LR and RL disparities is smaller than the LR check threshold.

-

StereoDepthConfig &

dai::StereoDepthConfig::setConfidenceThreshold(int confThr) Confidence threshold for disparity calculation

- Parameters

confThr: Confidence threshold value 0..255

-

StereoDepthConfig &

dai::StereoDepthConfig::setLeftRightCheckThreshold(int threshold) - Parameters

threshold: Set threshold for left-right, right-left disparity map combine, 0..255

Limitations¶

Median filtering is disabled when subpixel mode is set to 4 or 5 bits.

For RGB-depth alignment the RGB camera has to be placed on the same horizontal line as the stereo camera pair.

RGB-depth alignment doesn’t work when using disparity shift.

Stereo depth FPS¶

| Stereo depth mode | FPS for 1280x720 | FPS for 640x400 |
|---|---|---|
| Standard mode | 60 | 110 |
| Left-Right Check | 55 | 105 |
| Subpixel Disparity | 45 | 105 |
| Extended Disparity | 54 | 105 |
| Subpixel + LR check | 34 | 96 |
| Extended + LR check | 26 | 62 |

All stereo modes were measured for depth output with the 5x5 median filter enabled. For 720P, mono cameras were set to 60 FPS, and for 400P, mono cameras were set to 110 FPS.

Usage¶

Python:

import depthai as dai

pipeline = dai.Pipeline()

stereo = pipeline.create(dai.node.StereoDepth)

# Left-right check gives better handling of occlusions (pass True to enable):

stereo.setLeftRightCheck(False)

# Extended disparity gives a closer-in minimum depth, disparity range is doubled (pass True to enable):

stereo.setExtendedDisparity(False)

# Subpixel gives better accuracy at longer distances, fractional disparity with 3 fractional bits by default (pass True to enable):

stereo.setSubpixel(False)

# Define and configure the MonoCamera nodes (left, right) beforehand

left.out.link(stereo.left)

right.out.link(stereo.right)

C++:

#include "depthai/depthai.hpp"

dai::Pipeline pipeline;

auto stereo = pipeline.create<dai::node::StereoDepth>();

// Left-right check gives better handling of occlusions (pass true to enable):

stereo->setLeftRightCheck(false);

// Extended disparity gives a closer-in minimum depth, disparity range is doubled (pass true to enable):

stereo->setExtendedDisparity(false);

// Subpixel gives better accuracy at longer distances, fractional disparity with 3 fractional bits by default (pass true to enable):

stereo->setSubpixel(false);

// Define and configure the MonoCamera nodes (left, right) beforehand

left->out.link(stereo->left);

right->out.link(stereo->right);

Examples of functionality¶

RGB Depth alignment - align depth to color camera

Reference¶

-

class

depthai.node.StereoDepth -

class

Id Node identificator. Unique for every node on a single Pipeline

-

class

PresetMode Members:

HIGH_ACCURACY

HIGH_DENSITY

-

property

name

-

property

-

enableDistortionCorrection(self: depthai.node.StereoDepth, arg0: bool) → None

-

getAssetManager(*args, **kwargs) Overloaded function.

getAssetManager(self: depthai.Node) -> depthai.AssetManager

getAssetManager(self: depthai.Node) -> depthai.AssetManager

-

getInputRefs(*args, **kwargs) Overloaded function.

getInputRefs(self: depthai.Node) -> list[depthai.Node.Input]

getInputRefs(self: depthai.Node) -> list[depthai.Node.Input]

-

getInputs(self: depthai.Node) → list[depthai.Node.Input]

-

getMaxDisparity(self: depthai.node.StereoDepth) → float

-

getName(self: depthai.Node) → str

-

getOutputRefs(*args, **kwargs) Overloaded function.

getOutputRefs(self: depthai.Node) -> list[depthai.Node.Output]

getOutputRefs(self: depthai.Node) -> list[depthai.Node.Output]

-

getOutputs(self: depthai.Node) → list[depthai.Node.Output]

-

getParentPipeline(*args, **kwargs) Overloaded function.

getParentPipeline(self: depthai.Node) -> depthai.Pipeline

getParentPipeline(self: depthai.Node) -> depthai.Pipeline

-

loadMeshFiles(self: depthai.node.StereoDepth, pathLeft: Path, pathRight: Path) → None

-

setAlphaScaling(self: depthai.node.StereoDepth, arg0: float) → None

-

setBaseline(self: depthai.node.StereoDepth, arg0: float) → None

-

setConfidenceThreshold(self: depthai.node.StereoDepth, arg0: int) → None

-

setDefaultProfilePreset(self: depthai.node.StereoDepth, arg0: depthai.node.StereoDepth.PresetMode) → None

-

setDepthAlign(*args, **kwargs) Overloaded function.

setDepthAlign(self: depthai.node.StereoDepth, align: depthai.RawStereoDepthConfig.AlgorithmControl.DepthAlign) -> None

setDepthAlign(self: depthai.node.StereoDepth, camera: depthai.CameraBoardSocket) -> None

-

setDepthAlignmentUseSpecTranslation(self: depthai.node.StereoDepth, arg0: bool) → None

-

setDisparityToDepthUseSpecTranslation(self: depthai.node.StereoDepth, arg0: bool) → None

-

setEmptyCalibration(self: depthai.node.StereoDepth) → None

-

setExtendedDisparity(self: depthai.node.StereoDepth, enable: bool) → None

-

setFocalLength(self: depthai.node.StereoDepth, arg0: float) → None

-

setFocalLengthFromCalibration(self: depthai.node.StereoDepth, arg0: bool) → None

-

setInputResolution(*args, **kwargs) Overloaded function.

setInputResolution(self: depthai.node.StereoDepth, width: int, height: int) -> None

setInputResolution(self: depthai.node.StereoDepth, resolution: tuple[int, int]) -> None

-

setLeftRightCheck(self: depthai.node.StereoDepth, enable: bool) → None

-

setMedianFilter(self: depthai.node.StereoDepth, arg0: depthai.MedianFilter) → None

-

setMeshStep(self: depthai.node.StereoDepth, width: int, height: int) → None

-

setNumFramesPool(self: depthai.node.StereoDepth, arg0: int) → None

-

setOutputDepth(self: depthai.node.StereoDepth, arg0: bool) → None

-

setOutputKeepAspectRatio(self: depthai.node.StereoDepth, keep: bool) → None

-

setOutputRectified(self: depthai.node.StereoDepth, arg0: bool) → None

-

setOutputSize(self: depthai.node.StereoDepth, width: int, height: int) → None

-

setPostProcessingHardwareResources(self: depthai.node.StereoDepth, arg0: int, arg1: int) → None

-

setRectification(self: depthai.node.StereoDepth, enable: bool) → None

-

setRectificationUseSpecTranslation(self: depthai.node.StereoDepth, arg0: bool) → None

-

setRectifyEdgeFillColor(self: depthai.node.StereoDepth, color: int) → None

-

setRectifyMirrorFrame(self: depthai.node.StereoDepth, arg0: bool) → None

-

setRuntimeModeSwitch(self: depthai.node.StereoDepth, arg0: bool) → None

-

setSubpixel(self: depthai.node.StereoDepth, enable: bool) → None

-

setSubpixelFractionalBits(self: depthai.node.StereoDepth, subpixelFractionalBits: int) → None

-

useHomographyRectification(self: depthai.node.StereoDepth, arg0: bool) → None

-

class

-

class

dai::node::StereoDepth: public dai::NodeCRTP<Node, StereoDepth, StereoDepthProperties>¶ StereoDepth node. Compute stereo disparity and depth from left-right image pair.

Public Types

Public Functions

-

void

setEmptyCalibration()¶ Specify that a passthrough/dummy calibration should be used, when input frames are already rectified (e.g. sourced from recordings on the host)

-

void

loadMeshFiles(const dai::Path &pathLeft, const dai::Path &pathRight) Specify local filesystem paths to the mesh calibration files for ‘left’ and ‘right’ inputs.

When a mesh calibration is set, it overrides the camera intrinsics/extrinsics matrices. Overrides useHomographyRectification behavior. Mesh format: a sequence of (y,x) points as ‘float’ with coordinates from the input image to be mapped in the output. The mesh can be subsampled, configured by setMeshStep.

With a 1280x800 resolution and the default (16,16) step, the required mesh size is:

width: 1280 / 16 + 1 = 81

height: 800 / 16 + 1 = 51

-

void

loadMeshData(const std::vector<std::uint8_t> &dataLeft, const std::vector<std::uint8_t> &dataRight) Specify mesh calibration data for ‘left’ and ‘right’ inputs, as vectors of bytes. Overrides useHomographyRectification behavior. See loadMeshFiles for the expected data format.

-

void

setMeshStep(int width, int height) Set the distance between mesh points. Default: (16, 16)

-

void

setInputResolution(int width, int height)¶ Specify input resolution size

Optional if MonoCamera exists, otherwise necessary

-

void

setInputResolution(std::tuple<int, int> resolution)¶ Specify input resolution size

Optional if MonoCamera exists, otherwise necessary

-

void

setOutputSize(int width, int height)¶ Specify disparity/depth output resolution size, implemented by scaling.

Currently only applicable when aligning to RGB camera

-

void

setOutputKeepAspectRatio(bool keep)¶ Specifies whether the frames resized by setOutputSize should preserve aspect ratio, with potential cropping when enabled. Default: true

-

void

setMedianFilter(dai::MedianFilter median)¶ - Parameters

median: Set kernel size for disparity/depth median filtering, or disable

-

void

setDepthAlign(Properties::DepthAlign align)¶ - Parameters

align: Set the disparity/depth alignment: centered (between the ‘left’ and ‘right’ inputs), or from the perspective of a rectified output stream

-

void

setDepthAlign(CameraBoardSocket camera)¶ - Parameters

camera: Set the camera from whose perspective the disparity/depth will be aligned

-

void

setConfidenceThreshold(int confThr)¶ Confidence threshold for disparity calculation

- Parameters

confThr: Confidence threshold value 0..255

-

void

setRectification(bool enable)¶ Rectify input images or not.

-

void

setLeftRightCheck(bool enable)¶ Computes disparities in both L-R and R-L directions and combines them.

For better occlusion handling, discarding invalid disparity values

-

void

setSubpixel(bool enable)¶ Computes disparity with sub-pixel interpolation (3 fractional bits by default).

Suitable for long range. Currently incompatible with extended disparity

-

void

setSubpixelFractionalBits(int subpixelFractionalBits)¶ Number of fractional bits for subpixel mode. Default value: 3. Valid values: 3,4,5. Defines the number of fractional disparities: 2^x. Median filter postprocessing is supported only for 3 fractional bits.

-

void

setExtendedDisparity(bool enable)¶ Disparity range increased from 0-95 to 0-190, combined from full resolution and downscaled images.

Suitable for short range objects. Currently incompatible with sub-pixel disparity

-

void

setRectifyEdgeFillColor(int color)¶ Fill color for missing data at frame edges

- Parameters

color: Grayscale 0..255, or -1 to replicate pixels

-

void

setRectifyMirrorFrame(bool enable)¶ DEPRECATED function. It was removed, since rectified images are not flipped anymore. Mirror rectified frames, only when LR-check mode is disabled. Default: true. The mirroring is required to have a normal non-mirrored disparity/depth output.

A side effect of this option is disparity alignment to the perspective of left or right input: false: mapped to left and mirrored, true: mapped to right. With LR-check enabled, this option is ignored, none of the outputs are mirrored, and disparity is mapped to right.

- Parameters

enable: True for normal disparity/depth, otherwise mirrored

-

void

setOutputRectified(bool enable)¶ Enable outputting rectified frames. Optimizes computation on device side when disabled. DEPRECATED. The outputs are auto-enabled if used

-

void

setOutputDepth(bool enable)¶ Enable outputting ‘depth’ stream (converted from disparity). In certain configurations, this will disable ‘disparity’ stream. DEPRECATED. The output is auto-enabled if used

-

void

setRuntimeModeSwitch(bool enable)¶ Enable runtime stereo mode switch, e.g. from standard to LR-check. Note: when enabled resources allocated for worst case to enable switching to any mode.

-

void

setNumFramesPool(int numFramesPool)¶ Specify number of frames in pool.

- Parameters

numFramesPool: How many frames should the pool have

-

float

getMaxDisparity() const¶ Useful for normalization of the disparity map.

- Return

Maximum disparity value that the node can return

-

void

setPostProcessingHardwareResources(int numShaves, int numMemorySlices)¶ Specify allocated hardware resources for stereo depth. Suitable only to increase post processing runtime.

- Parameters

numShaves: Number of shaves.

numMemorySlices: Number of memory slices.

-

void

setDefaultProfilePreset(PresetMode mode)¶ Sets a default preset based on specified option.

- Parameters

mode: Stereo depth preset mode

-

void

setFocalLengthFromCalibration(bool focalLengthFromCalibration)¶ Whether to use focal length from calibration intrinsics or calculate based on calibration FOV. Default value is true.

-

void

useHomographyRectification(bool useHomographyRectification)¶ Use 3x3 homography matrix for stereo rectification instead of sparse mesh generated on device. Default behaviour is AUTO, for lenses with FOV over 85 degrees sparse mesh is used, otherwise 3x3 homography. If custom mesh data is provided through loadMeshData or loadMeshFiles this option is ignored.

- Parameters

useHomographyRectification: true: 3x3 homography matrix generated from calibration data is used for stereo rectification, can’t correct lens distortion. false: sparse mesh is generated on-device from calibration data with mesh step specified with setMeshStep (Default: (16, 16)), can correct lens distortion. Implementation for generating the mesh is same as opencv’s initUndistortRectifyMap function. Only the first 8 distortion coefficients are used from calibration data.

-

void

enableDistortionCorrection(bool enableDistortionCorrection)¶ Equivalent to useHomographyRectification(!enableDistortionCorrection)

-

void

setBaseline(float baseline)¶ Override baseline from calibration. Used only in disparity to depth conversion. Units are centimeters.

-

void

setFocalLength(float focalLength)¶ Override focal length from calibration. Used only in disparity to depth conversion. Units are pixels.

-

void

setDisparityToDepthUseSpecTranslation(bool specTranslation)¶ Use baseline information for disparity to depth conversion from specs (design data) or from calibration. Default: true

-

void

setRectificationUseSpecTranslation(bool specTranslation)¶ Obtain rectification matrices using spec translation (design data) or from calibration in calculations. Should be used only for debugging. Default: false

-

void

setDepthAlignmentUseSpecTranslation(bool specTranslation)¶ Use baseline information for depth alignment from specs (design data) or from calibration. Default: true

-

void

setAlphaScaling(float alpha)¶ Free scaling parameter between 0 (when all the pixels in the undistorted image are valid) and 1 (when all the source image pixels are retained in the undistorted image). On some high distortion lenses, and/or due to rectification (image rotated) invalid areas may appear even with alpha=0, in these cases alpha < 0.0 helps removing invalid areas. See getOptimalNewCameraMatrix from opencv for more details.

Public Members

-

StereoDepthConfig

initialConfig¶ Initial config to use for StereoDepth.

-

Input

inputConfig= {*this, "inputConfig", Input::Type::SReceiver, false, 4, {{DatatypeEnum::StereoDepthConfig, false}}}¶ Input StereoDepthConfig message with ability to modify parameters in runtime. Default queue is non-blocking with size 4.

-

Input

left= {*this, "left", Input::Type::SReceiver, false, 8, true, {{DatatypeEnum::ImgFrame, true}}}¶ Input for left ImgFrame of left-right pair

Default queue is non-blocking with size 8

-

Input

right= {*this, "right", Input::Type::SReceiver, false, 8, true, {{DatatypeEnum::ImgFrame, true}}}¶ Input for right ImgFrame of left-right pair

Default queue is non-blocking with size 8

-

Output

depth= {*this, "depth", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries RAW16 encoded (0..65535) depth data in depth units (millimeter by default).

Non-determined / invalid depth values are set to 0

-

Output

disparity= {*this, "disparity", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries RAW8 / RAW16 encoded disparity data: RAW8 encoded (0..95) for standard mode; RAW8 encoded (0..190) for extended disparity mode; RAW16 encoded for subpixel disparity mode:

0..760 for 3 fractional bits (by default)

0..1520 for 4 fractional bits

0..3040 for 5 fractional bits

-

Output

syncedLeft= {*this, "syncedLeft", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Passthrough ImgFrame message from ‘left’ Input.

-

Output

syncedRight= {*this, "syncedRight", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Passthrough ImgFrame message from ‘right’ Input.

-

Output

rectifiedLeft= {*this, "rectifiedLeft", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries RAW8 encoded (grayscale) rectified frame data.

-

Output

rectifiedRight= {*this, "rectifiedRight", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries RAW8 encoded (grayscale) rectified frame data.

-

Output

outConfig= {*this, "outConfig", Output::Type::MSender, {{DatatypeEnum::StereoDepthConfig, false}}}¶ Outputs StereoDepthConfig message that contains current stereo configuration.

-

Output

debugDispLrCheckIt1= {*this, "debugDispLrCheckIt1", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries left-right check first iteration (before combining with second iteration) disparity map. Useful for debugging/fine tuning.

-

Output

debugDispLrCheckIt2= {*this, "debugDispLrCheckIt2", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries left-right check second iteration (before combining with first iteration) disparity map. Useful for debugging/fine tuning.

-

Output

debugExtDispLrCheckIt1= {*this, "debugExtDispLrCheckIt1", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries extended left-right check first iteration (downscaled frame, before combining with second iteration) disparity map. Useful for debugging/fine tuning.

-

Output

debugExtDispLrCheckIt2= {*this, "debugExtDispLrCheckIt2", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries extended left-right check second iteration (downscaled frame, before combining with first iteration) disparity map. Useful for debugging/fine tuning.

-

Output

debugDispCostDump= {*this, "debugDispCostDump", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries cost dump of disparity map. Useful for debugging/fine tuning.

-

Output

confidenceMap= {*this, "confidenceMap", Output::Type::MSender, {{DatatypeEnum::ImgFrame, false}}}¶ Outputs ImgFrame message that carries RAW8 confidence map. Lower values mean higher confidence of the calculated disparity value. RGB alignment, left-right check, or any postprocessing (e.g. median filter) is not performed on the confidence map.

Public Static Attributes

-

static constexpr const char *

NAME= "StereoDepth"¶

Private Members

-

PresetMode

presetMode= PresetMode::HIGH_DENSITY¶

-

std::shared_ptr<RawStereoDepthConfig>

rawConfig¶


Disparity¶

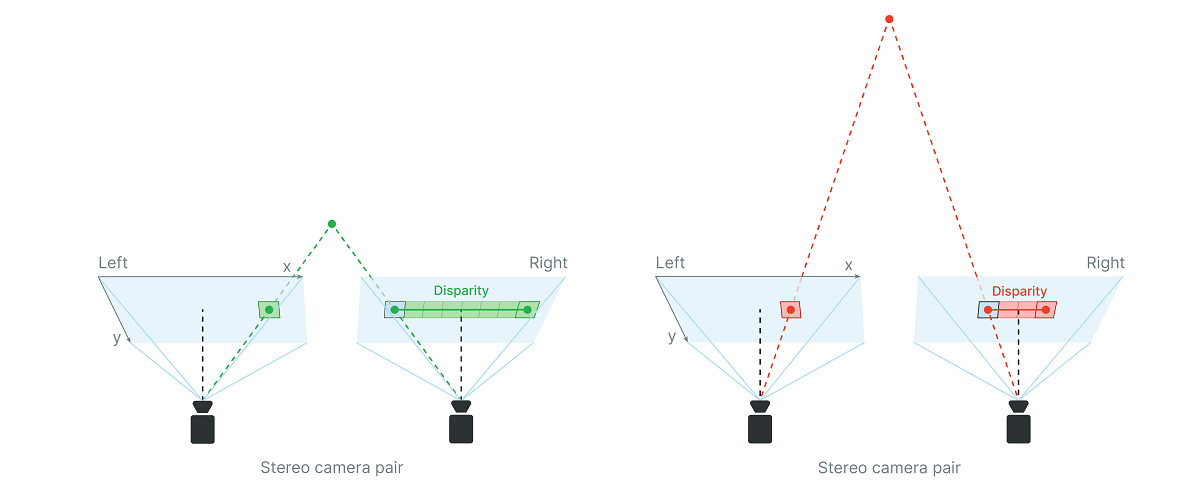

Disparity refers to the distance between two corresponding points in the left and right image of a stereo pair.

When calculating the disparity, each pixel in the disparity map is assigned a confidence value 0..255 by the stereo matching algorithm:

0 - maximum confidence that it holds a valid value

255 - minimum confidence, so there is more chance that the value is incorrect

(This confidence score is inverted compared with, for example, a neural network confidence score, where a higher value means more confidence.)

For the final disparity map, filtering is applied based on the confidence threshold value: pixels whose confidence score is larger than the threshold are invalidated, i.e. their disparity value is set to zero. You can set the confidence threshold with stereo.initialConfig.setConfidenceThreshold().
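The invalidation rule can be sketched on the host side as follows. This only illustrates the rule described above on plain nested lists; the function name is hypothetical, and on the device the filtering is done by the StereoDepth node itself.

```python
def apply_confidence_threshold(disparity, confidence, threshold):
    """Zero out disparity pixels whose confidence score exceeds the threshold.

    Lower confidence scores mean higher confidence (0 is best, 255 is worst).
    """
    return [
        [d if c <= threshold else 0 for d, c in zip(d_row, c_row)]
        for d_row, c_row in zip(disparity, confidence)
    ]


disparity = [[40, 12, 73]]
confidence = [[10, 250, 200]]
print(apply_confidence_threshold(disparity, confidence, 200))  # [[40, 0, 73]]
```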

Calculate depth using disparity map¶

Disparity and depth are inversely related. As disparity decreases, depth increases non-linearly (in proportion to 1/disparity), scaled by the baseline and focal length. This means that if the disparity value is close to zero, a small change in disparity produces a large change in depth. Conversely, if the disparity value is large, even large changes in disparity do not lead to a large change in depth.

By considering this fact, depth can be calculated using this formula:

depth = focal_length_in_pixels * baseline / disparity_in_pixels

Where baseline is the distance between two mono cameras. Note the unit used for baseline and depth is the same.

To get the focal length in pixels, you can read the camera calibration, as the focal length in pixels is written in the camera intrinsics (intrinsics[0][0]):

import depthai as dai

with dai.Device() as device:

    # Read the calibration data stored on the device

    calibData = device.readCalibration()

    # CAM_C is the socket of the right mono camera

    intrinsics = calibData.getCameraIntrinsics(dai.CameraBoardSocket.CAM_C)

    print('Right mono camera focal length in pixels:', intrinsics[0][0])

Here is the theoretical calculation of the focal length in pixels:

focal_length_in_pixels = image_width_in_pixels * 0.5 / tan(HFOV * 0.5 * PI/180)

# With 400P mono camera resolution where HFOV=71.9 degrees

focal_length_in_pixels = 640 * 0.5 / tan(71.9 * 0.5 * PI / 180) = 441.25

# With 800P mono camera resolution where HFOV=71.9 degrees

focal_length_in_pixels = 1280 * 0.5 / tan(71.9 * 0.5 * PI / 180) = 882.5
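The theoretical formula can be checked with a few lines of Python. This is a minimal sketch; the helper name `focal_length_px` is hypothetical, and real devices should prefer the calibrated intrinsics read from the device.

```python
import math


def focal_length_px(image_width_px, hfov_deg):
    """Theoretical focal length in pixels from image width and horizontal FOV."""
    return image_width_px * 0.5 / math.tan(math.radians(hfov_deg) * 0.5)


f_400p = focal_length_px(640, 71.9)   # 400P mono resolution
f_800p = focal_length_px(1280, 71.9)  # 800P mono resolution

print(f"400P: {f_400p:.2f} px, 800P: {f_800p:.2f} px")
```

Doubling the image width at the same HFOV doubles the focal length in pixels, which is why the 800P value is twice the 400P value.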

Examples for calculating the depth value, using the OAK-D (7.5cm baseline):

# For OAK-D @ 400P mono cameras and disparity of eg. 50 pixels

depth = 441.25 * 7.5 / 50 = 66.19 # cm

# For OAK-D @ 800P mono cameras and disparity of eg. 10 pixels

depth = 882.5 * 7.5 / 10 = 661.88 # cm

Note that the disparity depth data is stored as uint16 values, where 0 is a special value meaning that the distance is unknown.
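The depth formula and the two OAK-D examples can be reproduced with a short sketch. The function name `depth_from_disparity` is hypothetical, and returning 0 for a zero disparity is an assumption chosen to mirror the "0 means unknown" convention described above.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_cm):
    """Depth in the same unit as the baseline; 0 stands for 'unknown'."""
    if disparity_px <= 0:
        return 0.0  # assumption: mirror the special value for unknown distance
    return focal_length_px * baseline_cm / disparity_px


BASELINE_CM = 7.5  # OAK-D baseline

depth_400p = depth_from_disparity(50, 441.25, BASELINE_CM)  # 400P, 50 px
depth_800p = depth_from_disparity(10, 882.5, BASELINE_CM)   # 800P, 10 px
print(f"{depth_400p:.2f} cm, {depth_800p:.2f} cm")

# The same one-pixel step matters far more at small disparities:
far_step = depth_from_disparity(1, 882.5, BASELINE_CM) - depth_from_disparity(2, 882.5, BASELINE_CM)
near_step = depth_from_disparity(94, 882.5, BASELINE_CM) - depth_from_disparity(95, 882.5, BASELINE_CM)
print(f"1->2 px changes depth by {far_step:.1f} cm, 94->95 px by {near_step:.2f} cm")
```

The last two values illustrate the inverse relationship: a one-pixel change near zero disparity moves the depth by tens of meters, while the same change near the top of the search range moves it by less than a centimeter.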

Min stereo depth distance¶

If the depth results for close-in objects look weird, this is likely because they are below the minimum depth-perception distance of the device.

To calculate this minimum distance, use the depth formula and choose the maximum value for the disparity_in_pixels parameter (keep in mind that depth is inversely related to disparity, so the maximum disparity yields the smallest depth).

For example OAK-D has a baseline of 7.5cm, focal_length_in_pixels of 882.5 pixels and the default maximum value for disparity_in_pixels is 95. By using the depth formula we get:

min_distance = focal_length_in_pixels * baseline / disparity_in_pixels = 882.5 * 7.5cm / 95 = 69.67cm

or roughly 70cm.

However this distance can be cut in 1/2 (to around 35cm for the OAK-D) with the following options:

Changing the resolution to 640x400, instead of the standard 1280x800.

Enabling Extended Disparity.

Extended Disparity mode increases the levels of disparity to 191 from the standard 96 pixels, thereby halving the minimum depth. It does so by computing the 96-pixel disparities on the original 1280x720 and on the downscaled 640x360 image, which are then merged to a 191-level disparity. For more information see the Extended Disparity tab in this table.

Using the previous OAK-D example, disparity_in_pixels now becomes 190 and the minimum distance is:

min_distance = focal_length_in_pixels * baseline / disparity_in_pixels = 882.5 * 7.5cm / 190 = 34.84cm

or roughly 35cm.

Note

Applying both of those options is possible, which would set the minimum depth to 1/4 of the standard settings, but at such short distances the minimum depth is limited by the focus distance, which is 19.6cm, since OAK-D mono cameras have a fixed focus range of 19.6cm to infinity.

See these examples for how to enable Extended Disparity.
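The minimum-distance figures in this section can be reproduced with the sketch below. The helper name `min_depth_cm` is hypothetical, and modeling the focus limit as a simple lower bound (`max(...)`) is an assumption made for illustration based on the note above.

```python
FOCUS_LIMIT_CM = 19.6  # fixed-focus near limit of the OAK-D mono cameras
BASELINE_CM = 7.5      # OAK-D baseline


def min_depth_cm(focal_length_px, max_disparity_px,
                 baseline_cm=BASELINE_CM, focus_limit_cm=FOCUS_LIMIT_CM):
    """Smallest measurable depth, bounded below by the focus limit (assumption)."""
    geometric = focal_length_px * baseline_cm / max_disparity_px
    return max(geometric, focus_limit_cm)


standard = min_depth_cm(882.5, 95)     # 800P, standard disparity search
extended = min_depth_cm(882.5, 190)    # 800P, extended disparity
half_res = min_depth_cm(441.25, 95)    # 400P, standard disparity search
combined = min_depth_cm(441.25, 190)   # 400P and extended disparity

print(f"standard={standard:.2f} extended={extended:.2f} "
      f"half_res={half_res:.2f} combined={combined:.2f} (cm)")
```

With both options combined, the geometric result (about 17.4cm) falls below the focus limit, so the sketch reports 19.6cm instead.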

Disparity shift to lower min depth perception¶

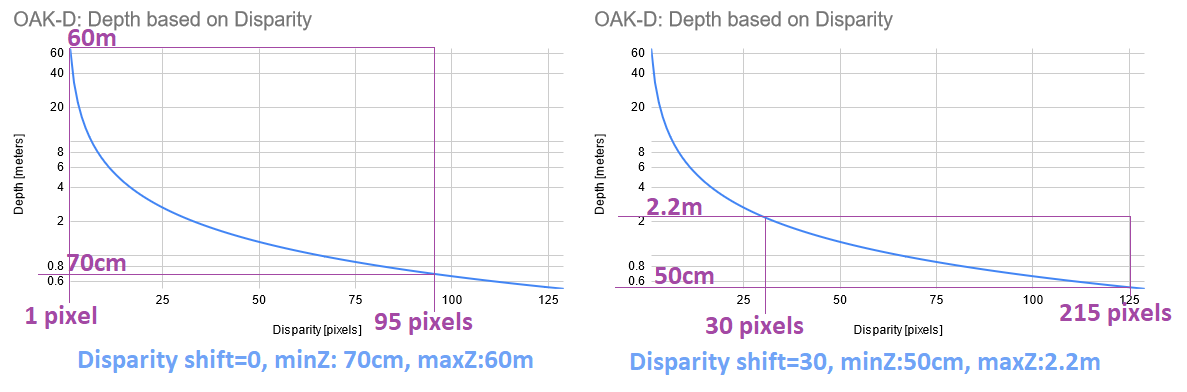

Another option to perceive a closer depth range is to use disparity shift. Disparity shift will shift the starting point of the disparity search, which will significantly decrease max depth (MaxZ) perception, but it will also decrease min depth (MinZ) perception. Disparity shift can be combined with extended/subpixel/LR-check modes.

Left graph shows min and max disparity and depth for OAK-D (7.5cm baseline, 800P resolution, ~70° HFOV) by default (disparity shift=0). See Calculate depth using disparity map. Since hardware (stereo block) has a fixed 95 pixel disparity search, DepthAI will search from 0 pixels (depth=INF) to 95 pixels (depth=71cm).

Right graph shows the same, but at disparity shift set to 30 pixels. This means that disparity search will be from 30 pixels (depth=2.2m) to 125 pixels (depth=50cm). This also means that depth will be very accurate at the short range (theoretically below 5mm depth error).

Limitations:

Because of the inverse relationship between disparity and depth, MaxZ will decrease much faster than MinZ as the disparity shift is increased. Therefore, it is advised not to use a larger than necessary disparity shift.

Tradeoff in reducing the MinZ this way is that objects at distances farther away than MaxZ will not be seen.

Because of the point above, we only recommend using disparity shift when MaxZ is known, such as having a depth camera mounted above a table pointing down at the table surface.

Output disparity map is not expanded, only the depth map. So if disparity shift is set to 50, and disparity value obtained is 90, the real disparity is 140.
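The effect of disparity shift on MinZ and MaxZ, and the recovery of the real disparity from the output disparity map, can be sketched as follows. The function names are hypothetical, and the focal length of 882.5 pixels is the theoretical 800P value from the depth section, so the results differ slightly from the rounded numbers in the graphs.

```python
import math

FOCAL_800P_PX = 882.5  # theoretical focal length at 800P
BASELINE_CM = 7.5      # OAK-D baseline
SEARCH_PX = 95         # fixed disparity search range of the stereo block


def depth_range_cm(shift_px, focal_px=FOCAL_800P_PX, baseline_cm=BASELINE_CM):
    """Return (MinZ, MaxZ) in cm for a given disparity shift."""
    min_z = focal_px * baseline_cm / (shift_px + SEARCH_PX)
    max_z = math.inf if shift_px == 0 else focal_px * baseline_cm / shift_px
    return min_z, max_z


def real_disparity(output_disparity_px, shift_px):
    """The output disparity map is not expanded, so add the shift back."""
    return output_disparity_px + shift_px


for shift in (0, 30, 60):
    min_z, max_z = depth_range_cm(shift)
    print(f"shift={shift:>2} px: MinZ={min_z:6.2f} cm, MaxZ={max_z:8.2f} cm")

print(real_disparity(90, 50))  # 140
```

Going from a shift of 30 to 60 pixels lowers MinZ by roughly 10cm but cuts MaxZ in half, which is why a larger than necessary shift is discouraged.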

Compared to Extended disparity, disparity shift:

(+) Is faster, as it doesn’t require an extra computation, which means there’s also no extra latency

(-) Reduces the MaxZ (significantly), while extended disparity only reduces MinZ.

Disparity shift can be combined with extended disparity.

StereoDepthConfig &dai::StereoDepthConfig::setDisparityShift(int disparityShift)

Shift input frame by a number of pixels to increase minimum depth. For example shifting by 48 will change effective disparity search range from (0,95] to [48,143]. An alternative approach to reducing the minZ. We normally only recommend doing this when it is known that there will be no objects farther away than MaxZ, such as having a depth camera mounted above a table pointing down at the table surface.
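A minimal configuration sketch in Python, assuming the DepthAI v2 Python API, shows where these settings are applied. The values 200 and 30 are example values only, and linking the cameras and outputs is omitted.

```python
import depthai as dai

pipeline = dai.Pipeline()
stereo = pipeline.create(dai.node.StereoDepth)

# Invalidate pixels whose confidence score is above 200 (example value)
stereo.initialConfig.setConfidenceThreshold(200)

# Halve the minimum depth by searching a larger disparity range
stereo.setExtendedDisparity(True)

# Start the disparity search at 30 pixels (example value); this lowers MinZ
# but also limits MaxZ, so use it only when the farthest object is known
stereo.initialConfig.setDisparityShift(30)
```

Extended disparity and disparity shift are shown together here because they can be combined; either one can be dropped depending on the required depth range.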

Max stereo depth distance¶

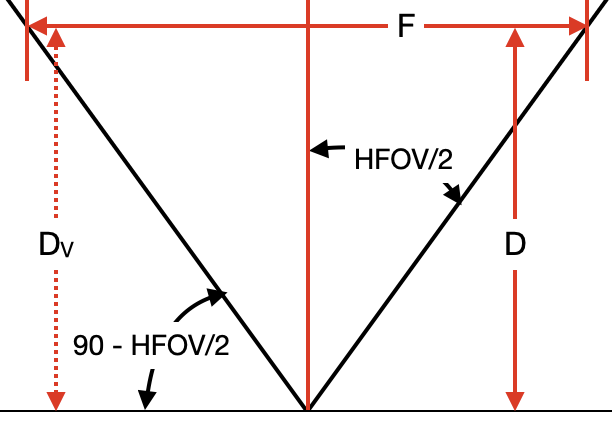

The maximum depth perception distance depends on the accuracy of the depth perception. The formula used to calculate this distance is an approximation, but is as follows:

Dm = (baseline/2) * tan((90 - HFOV / HPixels)*pi/180)

So using this formula for existing models the theoretical max distance is:

# For OAK-D (7.5cm baseline)

Dm = (7.5/2) * tan((90 - 71.9/1280)*pi/180) = 3825.03cm = 38.25 meters

# For OAK-D-CM4 (9cm baseline)

Dm = (9/2) * tan((90 - 71.9/1280)*pi/180) = 4590.04cm = 45.9 meters
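The approximation above can be evaluated for any baseline with a small helper. This is a sketch of the formula as given in this section; the function name `max_depth_cm` is hypothetical.

```python
import math


def max_depth_cm(baseline_cm, hfov_deg, h_pixels):
    """Approximate maximum depth: Dm = (baseline/2) * tan(90 - HFOV/HPixels)."""
    angle_deg = 90 - hfov_deg / h_pixels
    return (baseline_cm / 2) * math.tan(math.radians(angle_deg))


oak_d = max_depth_cm(7.5, 71.9, 1280)      # OAK-D
oak_d_cm4 = max_depth_cm(9.0, 71.9, 1280)  # OAK-D-CM4

print(f"OAK-D: {oak_d / 100:.2f} m, OAK-D-CM4: {oak_d_cm4 / 100:.2f} m")
```

The result scales linearly with the baseline, so a custom baseline of 15cm would double the OAK-D figure under the same assumptions.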

If greater precision for long range measurements is required, consider enabling Subpixel Disparity or using a larger baseline distance between mono cameras. For a custom baseline, you could consider using an OAK-FFC device or designing your own baseboard PCB with the required baseline. For more information see Subpixel Disparity under the Stereo Mode tab in this table.

Depth perception accuracy¶

Disparity depth works by matching features from one image to the other and its accuracy is based on multiple parameters:

Texture of objects / backgrounds

Backgrounds may interfere with the object detection, since backgrounds are objects too, which will make depth perception less accurate. So disparity depth works very well outdoors as there are very rarely perfectly-clean/blank surfaces there - but these are relatively commonplace indoors (in clean buildings at least).

Lighting

If the illumination is low, the disparity map will be of low confidence, which will result in a noisy depth map.

Baseline / distance to objects

A lower baseline enables us to detect the depth at a closer distance as long as the object is visible in both frames. However, this reduces the accuracy at large distances, because fewer pixels represent the object and disparity decreases towards 0 much faster. So the common practice is to adjust the baseline according to how far or close we want to be able to detect objects.

Limitation¶

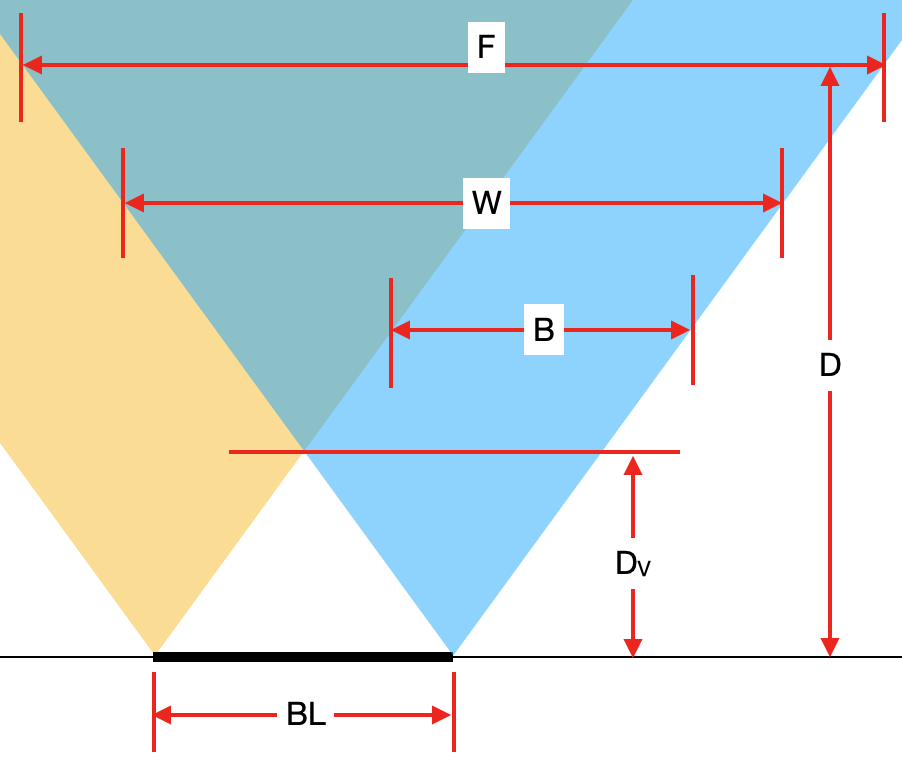

Since depth is calculated from disparity, which requires the pixels to overlap, there is inherently a vertical band on the left side of the left mono camera and on the right side of the right mono camera where depth cannot be calculated, since it is seen by only one camera. That band is marked with B in the picture.

Meaning of variables on the picture:

BL [cm] - Baseline of the stereo cameras.

Dv [cm] - Minimum distance where both cameras see an object (thus where depth can be calculated).

B [pixels] - Width of the band where depth cannot be calculated.

W [pixels] - Width of the mono camera image in pixels (the number of horizontal pixels), also noted as HPixels in other formulas.

D [cm] - Distance from the camera plane to an object (see image here).

F [cm] - Width of the image at the distance D.

With the use of the tan function, the following formulas can be obtained:

F = 2 * D * tan(HFOV/2)

Dv = (BL/2) * tan(90 - HFOV/2)

In order to obtain B, we can use the tan function again (same as for F), but this time we must also multiply it by the ratio between W and F in order to convert units to pixels. That gives the following formula:

B = 2 * Dv * tan(HFOV/2) * W / F

B = 2 * Dv * tan(HFOV/2) * W / (2 * D * tan(HFOV/2))

B = W * Dv / D # pixels

Example: Suppose we are using an OAK-D, which has an HFOV of 72° and a baseline (BL) of 7.5 cm, at 640x400 (400P) resolution, so W = 640, and an object is D = 100 cm away. We can calculate B in the following way:

Dv = 7.5 / 2 * tan(90 - 72/2) = 3.75 * tan(54°) = 5.16 cm

B = 640 * 5.16 / 100 = 33 # pixels
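The same calculation can be written as a short Python sketch, which makes it easy to try other distances or resolutions. The function names are hypothetical; the formulas are the ones derived above.

```python
import math


def min_overlap_distance_cm(baseline_cm, hfov_deg):
    """Dv: closest distance at which both cameras see the same object."""
    return (baseline_cm / 2) * math.tan(math.radians(90 - hfov_deg / 2))


def blind_band_px(width_px, baseline_cm, hfov_deg, distance_cm):
    """B: width in pixels of the band seen by only one camera at distance D."""
    return width_px * min_overlap_distance_cm(baseline_cm, hfov_deg) / distance_cm


dv = min_overlap_distance_cm(7.5, 72)
band = blind_band_px(640, 7.5, 72, 100)

print(f"Dv = {dv:.2f} cm, B = {band:.0f} pixels")
```

Because B is inversely proportional to D, the band gets wider as objects move closer to the camera and narrower for distant scenes.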

Credit for the calculations and images goes to our community member gregflurry, who shared them in this forum post.

Note

OAK-D-PRO will include both an IR dot projector and an IR LED, which will enable operation in no light. The IR LED is used to illuminate the whole area (for mono/color frames), while the IR dot projector is mostly for accurate disparity matching, so that depth maps are of good quality on blank surfaces as well. For outdoors, the IR laser dot projector is only relevant at night. For more information see the development progress here.

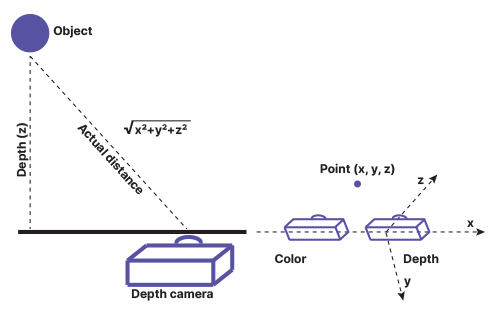

Measuring real-world object dimensions¶

Because the depth map contains the Z distance, objects parallel to the camera are measured accurately by default. For objects that are not parallel, the Euclidean distance calculation can be used. Please refer to the below:

When running eg. the RGB & MobilenetSSD with spatial data example, you could calculate the distance to the detected object from XYZ coordinates (SpatialImgDetections) using the code below (after code line 143 of the example):

distance = math.sqrt(detection.spatialCoordinates.x ** 2 + detection.spatialCoordinates.y ** 2 + detection.spatialCoordinates.z ** 2) # mm
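A self-contained version of this calculation is sketched below so it can be tried without a device. The `SpatialCoordinates` dataclass is a hypothetical stand-in for `detection.spatialCoordinates`, and the sample values are made up.

```python
import math
from dataclasses import dataclass


@dataclass
class SpatialCoordinates:
    """Hypothetical stand-in for detection.spatialCoordinates (values in mm)."""
    x: float
    y: float
    z: float


def euclidean_distance_mm(coords):
    """Straight-line distance from the camera to the point, in mm."""
    return math.sqrt(coords.x ** 2 + coords.y ** 2 + coords.z ** 2)


# Object 1.2 m in front of the camera, offset 0.3 m sideways and 0.4 m up
coords = SpatialCoordinates(x=300.0, y=400.0, z=1200.0)
distance = euclidean_distance_mm(coords)
print(distance)  # 1300.0
```

Here the Z distance alone is 1200 mm, while the straight-line distance to the object is 1300 mm, which shows how much the two can differ for off-axis objects.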